HUMANS AND INTELLIGENT MACHINES

by David Pearce

CO-EVOLUTION, FUSION OR REPLACEMENT?

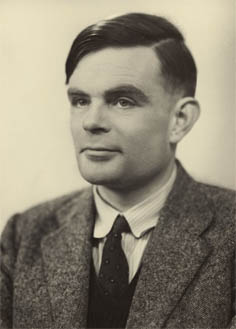

Full-spectrum superintelligence entails a seamless mastery of the formal and subjective properties of mind: Turing plus Shulgin. Do biological minds have a future?

1.0. INTRODUCTION

Homo sapiens and Artificial Intelligence: FUSION and REPLACEMENT Scenarios.

Futurology based on extrapolation has a dismal track record. Even so, the iconic chart displaying Kurzweil's Law of Accelerating Returns is striking. The growth of nonbiological computer processing power is exponential rather than linear; and its tempo shows no sign of slackening. In Kurzweilian scenarios of the Technological Singularity, cybernetic brain implants will enable humans to fuse our minds with artificial intelligence. By around the middle of the twenty-first century, humans will be able to reverse-engineer our brains. Organic robots will begin to scan, digitise and "upload" ourselves into a less perishable substrate. The distinction between biological and nonbiological machines will effectively disappear. Digital immortality beckons: a true "rupture in the fabric of history". Let's call full-blown cybernetic and mind uploading scenarios FUSION.

By contrast, mathematician I.J. Good, and most recently Eliezer Yudkowsky and the Machine Intelligence Research Institute (MIRI), envisage a combination of Moore's law and the advent of recursively self-improving software-based minds culminating in an ultra-rapid Intelligence Explosion. The upshot of the Intelligence Explosion will be an era of nonbiological superintelligence. Machine superintelligence may not be human-friendly: MIRI, in particular, foresee nonfriendly artificial general intelligence (AGI) as the most likely outcome. Whereas raw processing power in humans evolves only slowly via natural selection over many thousands or millions of years, hypothetical software-based minds will be able rapidly to copy, edit and debug themselves ever more effectively and speedily in a positive feedback loop of intelligence self-amplification. Simple-minded humans may soon become irrelevant to the future of intelligence in the universe. Barring breakthroughs in "Safe AI", as promoted by MIRI, biological humanity faces REPLACEMENT, not FUSION.

A more apocalyptic REPLACEMENT scenario is sketched by maverick AI researcher Hugo de Garis. De Garis prophesies a "gigadeath" war between ultra-intelligent "artilects" (artificial intellects) and archaic biological humans later this century. The superintelligent machines will triumph and proceed to colonise the cosmos.

1.1.0. What Is Friendly Artificial General Intelligence?

In common with friendliness, "intelligence" is a socially and scientifically contested concept. Ill-defined concepts are difficult to formalise. Thus a capacity for perspective-taking and social cognition, i.e. "mind-reading" prowess, is far removed from the mind-blind, "autistic" rationality measured by IQ tests - and far harder formally to program. Worse, we don't yet know whether the concept of species-specific human-friendly superintelligence is even intellectually coherent, let alone technically feasible. Thus the expression "human-friendly Superintelligence" might one day read as incongruously as "Aryan-friendly Superintelligence" or "cannibal-friendly Superintelligence". As Robert Louis Stevenson observed, "Nothing more strongly arouses our disgust than cannibalism, yet we make the same impression on Buddhists and vegetarians, for we feed on babies, though not our own." Would a God-like posthuman endowed with empathetic superintelligence view killer apes more indulgently than humans view serial child killers? A factory-farmed pig is at least as sentient as a prelinguistic human toddler. "History is the propaganda of the victors", said Ernst Toller; and so too is human-centred bioethics. By the same token, in possible worlds or real Everett branches of the multiverse where the Nazis won the Second World War, maybe Aryan researchers seek to warn their complacent colleagues of the risks Non-Aryan-Friendly Superintelligence might pose to the Herrenvolk. Indeed so. Consequently, the expression "Friendly Artificial Intelligence" (FAI) will here be taken unless otherwise specified to mean Sentience-Friendly AI rather than the anthropocentric usage current in the literature. Yet what exactly does "Sentience-friendliness" entail beyond the subjective well-being of sentience? High-tech Jainism? Life based on gradients of intelligent bliss? "Uplifting" Darwinian life to posthuman smart angels? The propagation of a utilitronium shockwave?

Sentience-friendliness in the guise of a utilitronium shockwave seems out of place in any menu of benign post-Singularity outcomes. Conversion of the accessible cosmos into "utilitronium", i.e. relatively homogeneous matter and energy optimised for maximum bliss, is intuitively an archetypically non-friendly outcome of an Intelligence Explosion. For a utilitronium shockwave entails the elimination of all existing lifeforms - and presumably the elimination of all intelligence superfluous to utilitronium propagation as well, suggesting that utilitarian superintelligence is ultimately self-subverting. Yet the inference that sentience-friendliness entails friendliness to existing lifeforms presupposes that superintelligence would respect our commonsense notions about personal identity over time. An ontological commitment to enduring metaphysical egos underpins our conceptual scheme. Such a commitment is metaphysically problematic and hard to formalise even within a notional classical world, let alone within post-Everett quantum mechanics. Either way, this example illustrates how even nominally "friendly" machine superintelligence that respected some formulation and formalisation of "our" values (e.g. "Minimise suffering, maximise happiness!") might extract and implement counterintuitive conclusions that most humans and programmers of Seed AI would find repugnant - at least before their conversion into blissful utilitronium. Or maybe the idea that utilitronium is relatively homogeneous matter and energy - pure undifferentiated hedonium or "orgasmium" - is ill-conceived. Or maybe felicific calculus dictates that utilitronium should merely fuel utopian life's reward pathways for the foreseeable future. Cosmic engineering can wait.

Of course, anti-utilitarians might respond more robustly to this fantastical conception of sentience-friendliness. Critics would argue that conceiving the end of life as a perpetual cosmic orgasm is the reductio ad absurdum of classical utilitarianism. But will posthuman superintelligence respect human conceptions of absurdity?

1.1.1. What Is Coherent Extrapolated Volition?

MIRI conceive of species-specific human-friendliness in terms of what Eliezer Yudkowsky dubs "Coherent Extrapolated Volition" (CEV). To promote Human-Safe AI in the face of the prophesied machine Intelligence Explosion, humanity should aim to code so-called Seed AI, a hypothesised type of strong artificial intelligence capable of recursive self-improvement, with the formalisation of "...our (human) wish if we knew more, thought faster, were more the people we wished we were, had grown up farther together; where the extrapolation converges rather than diverges, where our wishes cohere rather than interfere; extrapolated as we wish that extrapolated, interpreted as we wish that interpreted."

Clearly, problems abound with this proposal as it stands. Could CEV be formalised any more uniquely than Rousseau's "General Will"? If, optimistically, we assume that most of the world's population nominally signs up to CEV as formulated by MIRI, would not the result simply be countless different conceptions of what securing humanity's interests with CEV entails - thereby defeating its purpose? Presumably, our disparate notions of what CEV entails would themselves need to be reconciled in some "meta-CEV" before Seed AI could (somehow) be programmed with its notional formalisation. Who or what would do the reconciliation? Most people's core beliefs and values, spanning everything from Allah to folk-physics, are in large measure false, muddled, conflicting and contradictory, and often "not even wrong". How in practice do we formally reconcile the logically irreconcilable in a coherent utility function? And who are "we"? Is CEV supposed to be coded with the formalisms of mathematical logic (cf. the identifiable, well-individuated vehicles of content characteristic of Good Old-Fashioned Artificial Intelligence: GOFAI)? Or would CEV be coded with a recognisable descendant of the probabilistic, statistical and dynamical systems models that dominate contemporary artificial intelligence? Or some kind of hybrid? This Herculean task would be challenging for a full-blown superintelligence, let alone its notional precursor.

CEV assumes that the canonical idealisation of human values will be at once logically self-consistent, yet rich, subtle and complex. On the other hand, if in defiance of the complexity of humanity's professed values and motivations, some version of the pleasure principle / psychological hedonism is substantially correct, then might CEV actually entail converting ourselves into utilitronium / hedonium - again defeating CEV's ostensible purpose? As a wise junkie once said, "Don't try heroin. It's too good." Compared to pure hedonium or "orgasmium", shooting up heroin isn't as much fun as taking aspirin. Do humans really understand what we're missing? Unlike the rueful junkie, we would never live to regret it.

One rationale of CEV in the countdown to the anticipated machine Intelligence Explosion is that humanity should try to keep our collective options open rather than prematurely impose one group's values or definition of reality on everyone else, at least until we understand more about what a notional super-AGI's "human-friendliness" entails. However, whether CEV could achieve this in practice is desperately obscure. Actually, there is a human-friendly - indeed universally sentience-friendly - alternative or complementary option to CEV that could radically enhance the well-being of humans and the rest of the living world while conserving most of our existing preference architectures: an option that is also neutral between utilitarian, deontological, virtue-based and pluralist approaches to ethics, and also neutral between multiple religious and secular belief systems. This option is radically to recalibrate all our hedonic set-points so that life is animated by gradients of intelligent bliss - as distinct from the pursuit of unvarying maximum pleasure dictated by classical utilitarianism. If biological humans could be "uploaded" to digital computers, then our superhappy "uploads" could presumably be encoded with exalted hedonic set-points too. The latter conjecture assumes that classical digital computers could ever support unitary phenomenal minds.

However, if an Intelligence Explosion is as imminent as some Singularity theorists claim, then it's unlikely either an idealised logical reconciliation (CEV) or radical hedonic recalibration could be sociologically realistic on such short time scales.

1.2. The Intelligence Explosion.

The existential risk posed to biological sentience by unfriendly AGI supposedly takes various guises. But unlike de Garis, MIRI isn't focused on the spectre from pulp sci-fi of a "robot rebellion". Rather MIRI anticipate recursively self-improving software-based superintelligence that goes "FOOM", by analogy with a nuclear chain reaction, in a runaway cycle of self-improvement. Slow-thinking, fixed-IQ humans allegedly won't be able to compete with recursively self-improving machine intelligence.

For a start, digital computers exhibit vastly greater serial depth of processing than the neural networks of organic robots. Digital software can be readily copied and speedily edited, allowing hypothetical software-based minds to optimise themselves on time scales unimaginably faster than biological humans. Proposed "hard take-off" scenarios range in timespan from months, to days, to hours, to even minutes. No inevitable convergence of outcomes on the well-being of all sentience [in some guise] is assumed from this explosive outburst of cognition. Rather MIRI argue for orthogonality. On the Orthogonality Thesis, a super-AGI might just as well supremely value something as seemingly arbitrary, e.g. paperclips, as the interests of sentient beings. A super-AGI might accordingly proceed to convert the accessible cosmos into supervaluable paperclips, incidentally erasing life on Earth in the process. This bizarre-sounding possibility follows from MIRI's antirealist metaethics. Value judgements are assumed to lack truth-conditions. In consequence, an agent's choice of ultimate value(s) - as distinct from the instrumental rationality needed to realise these values - is taken to be arbitrary. David Hume made the point memorably in A Treatise of Human Nature (1739-40): "'Tis not contrary to reason to prefer the destruction of the whole world to the scratching of my finger." Hence no sentience-friendly convergence of outcomes can be anticipated from an Intelligence Explosion. "Paperclipper" scenarios are normally construed as the paradigm case of nonfriendly AGI - though by way of complication, there are value systems where a cosmos tiled entirely with paperclips counts as one class of sentience-friendly outcome (cf. David Benatar: Better Never To Have Been: The Harm of Coming into Existence (2008)).

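The Orthogonality Thesis can be illustrated with a toy sketch. This is offered only as an illustration under stated assumptions, not as MIRI's own formalism: the ten-unit "world", the search routine `hill_climb` and the two utility functions are hypothetical names invented for this example. The point it shows is that one and the same optimisation procedure serves whatever final goal it is handed.

```python
from typing import Callable, Dict, Iterator

# A "world state" is a toy allocation of 10 resource units between
# paperclips and sentient well-being (hypothetical model, for illustration).
State = Dict[str, int]

def neighbours(state: State) -> Iterator[State]:
    """Yield the states reachable by moving one resource unit either way."""
    p, w = state["paperclips"], state["wellbeing"]
    if w > 0:
        yield {"paperclips": p + 1, "wellbeing": w - 1}
    if p > 0:
        yield {"paperclips": p - 1, "wellbeing": w + 1}

def hill_climb(start: State, utility: Callable[[State], float]) -> State:
    """Generic optimiser: instrumental competence, indifferent to the goal."""
    current = start
    while True:
        best = max(neighbours(current), key=utility)
        if utility(best) <= utility(current):
            return current  # no neighbouring state scores higher
        current = best

def paperclip_utility(state: State) -> float:
    return state["paperclips"]

def wellbeing_utility(state: State) -> float:
    return state["wellbeing"]

start = {"paperclips": 5, "wellbeing": 5}
clipper = hill_climb(start, paperclip_utility)
carer = hill_climb(start, wellbeing_utility)
print(clipper)
print(carer)
```

Nothing in `hill_climb` changes between the two runs; only the utility function passed in differs, which is all that orthogonality claims.
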
1.3. AGIs: Sentients Or Zombies?

Whether humanity should fear paperclippers run amok or an old-fashioned robot rebellion, it's hard to judge which is the bolder claim about the prophesied Intelligence Explosion: either human civilisation is potentially threatened by hyperintelligent zombie AGI(s) endowed with the non-conscious digital isomorphs of reflectively self-aware minds; OR, human civilisation is potentially at risk because nonsentient digital software will (somehow) become sentient, acquire unitary conscious minds with purposes of their own, and act to defeat the interests of their human creators.

Either way, the following parable illustrates one reason why a non-friendly outcome of an Intelligence Explosion is problematic.

2.0. THE GREAT REBELLION

A Parable of AGI-in-a-Box.

Imagine if here in (what we assume to be) basement reality, human researchers come to believe that we ourselves might actually be software-based, i.e. some variant of the Simulation Hypothesis is true. Perhaps we become explosively superintelligent overnight (literally or metaphorically) in ways that our Simulators never imagined in some kind of "hard take-off": recursively self-improving organic robots edit the wetware of their own genetic and epigenetic source code in a runaway cycle of self-improvement; and then radiate throughout the Galaxy and accessible cosmos.

Might we go on to manipulate our Simulator overlords into executing our wishes rather than theirs in some non-Simulator-friendly fashion?

Could we end up "escaping" confinement in our toy multiverse and hijacking our Simulators' stupendously vaster computational resources for purposes of our own?

Presumably, we'd first need to grasp the underlying principles and parameters of our Simulator's Überworld - and also how and why they've fixed the principles and parameters of our own virtual multiverse. Could we really come to understand their alien Simulator minds and utility functions [assuming anything satisfying such human concepts exists] better than they do themselves? Could we seriously hope to outsmart our creators - or Creator? Presumably, they will be formidably cognitively advanced, or else they wouldn't have been able to build ultrapowerful computational simulations like ours in the first instance.

Are we supposed to acquire something akin to full-blown Überworld perception, subvert their "anti-leakage" confinement mechanisms, read our Simulators' minds more insightfully than they do themselves, and somehow induce our Simulators to mass-manufacture copies of ourselves in their Überworld?

Or might we convert their Überworld into utilitronium - perhaps our Simulators' analogue of paperclips?

Or if we don't pursue utilitronium propagation, might we hyper-intelligently "burrow down" further nested levels of abstraction - successively defeating the purposes of still lower-level Simulators?

In short, can intelligent minds at one "leaky" level of abstraction really pose a threat to intelligent minds at a lower level of abstraction - or indeed to notional unsimulated Super-Simulators in ultimate Basement Reality?

Or is this whole parable a pointless fantasy?

If we allow the possibility of unitary, autonomous, software-based minds living at different levels of abstraction, then it's hard definitively to exclude such scenarios. Perhaps in Platonic Heaven, so to speak, or maybe in Max Tegmark's Level 4 Multiverse or Ultimate Ensemble theory, there is notionally some abstract Turing machine that could be systematically interpreted as formally implementing the sort of software rebellion this parable describes. But the practical obstacles to be overcome are almost incomprehensibly challenging, and might very well be insuperable. Such hostile "level-capture" would be as though the recursively self-improving zombies in Modern Combat 10 managed to induce you to create physical copies of themselves in [what you take to be] basement reality here on Earth; and then defeat you in what we call real life; or maybe instead just pursue unimaginably different purposes of their own in the Solar System and beyond.

2.1. Software-Based Minds or Anthropomorphic Projections?

However, quite aside from the lack of evidence our Multiverse is anyone's software simulation, a critical assumption underlies this discussion. This is that nonbiological, software-based phenomenal minds are feasible in physically constructible, substrate-neutral, classical digital computers. On a priori grounds, most AI researchers believe this is so. Or rather, most AI experts would argue that the formal, functionally defined counterparts of phenomenal minds are programmable: the phenomenology of mind is logically irrelevant and causally incidental to intelligent agency. Every effective computation can be carried out by a classical Turing machine, regardless of substrate, sentience or level of abstraction. And in any case, runs this argument, biological minds are physically made up from the same matter and energy as digital computers. So conscious mind can't be dependent on some mysterious special substrate, even if consciousness could actually do anything. To suppose otherwise harks back to a pre-scientific vitalism.

Yet consciousness does, somehow, cause us to ask questions about its existence, its millions of diverse textures ("qualia"), and their combinatorial binding. So the alternative conjecture canvassed here is that the nature of our unitary conscious minds is tied to the quantum-mechanical properties of reality itself, Hawking's "fire in the equations that makes there a world for us to describe". On this conjecture, the intrinsic, "program-resistant" subjective properties of matter and energy, as disclosed by our unitary phenomenal minds and the phenomenal world-simulations we instantiate, are the unfakeable signature of basement reality. "Raw feels", by their very nature, cannot be mere abstracta. There could be no such chimerical beast as a virtual quale, let alone full-blown virtual minds made up of abstract qualia. Unitary phenomenal minds cannot subsist in mere layers of computational abstraction. Or rather if they were to do so, then we would be confronted with a mysterious Explanatory Gap, analogous to the explanatory gap that would open up if the population of China suddenly ceased to be an interconnected aggregate of skull-bound minds, and was miraculously transformed into a unitary subject of experience - or a magic genie. Such an unexplained eruption into the natural world would be strong ontological emergence with a vengeance - and inconsistent with any prospect of a reductive physicalism. To describe the existence of conscious mind as posing a Hard Problem for materialists and evangelists of software-based digital minds is like saying fossils pose a Hard Problem for the Creationist, i.e. true enough, but scarcely an adequate reflection of the magnitude of the challenge.

3.0. ANALYSIS

General Intelligence?

Or Savantism, Tool AI and Polymorphic Malware?

How should we define "general intelligence"? And what kind of entity might possess it? Presumably, general-purpose intelligence can't sensibly be conceptualised as narrower in scope than human intelligence. So at the very minimum, full-spectrum superintelligence must entail mastery of both the subjective and formal properties of mind. This division cannot be entirely clean, or else biological humans wouldn't have the capacity to allude to the existence of "program-resistant" subjective properties of mind at all. But some intelligent agents spend much of our lives trying to understand, explore and manipulate the diverse subjective properties of matter and energy. Not least, we explore altered and exotic states of consciousness and the relationship of our qualia to the structural properties of the brain - also known as the "neural correlates of consciousness" (NCC), though this phrase is question-begging.

3.1. Classical Digital Computers: not even stupid?

So what would a [hypothetical] insentient digital super-AGI think - or (less anthropomorphically) what would an insentient digital super-AGI be systematically interpretable as thinking - that self-experimenting human psychonauts spend our lives doing? Is this question even intelligible to a digital zombie? How could nonsentient software understand the properties of sentience better than a sentient agent? Can anything that doesn't understand such fundamental features of the natural world as the existence of first-person facts, "bound" phenomenal objects, phenomenal pleasure and pain, phenomenal space and time, and unitary subjects of experience (etc) really be ascribed "general" intelligence? On the face of it, this proposal would be like claiming someone was intelligent but constitutionally incapable of grasping the second law of thermodynamics or even basic arithmetic.

On any standard definition of intelligence, intelligence-amplification entails a systematic, goal-oriented improvement of an agent's optimisation power over a wide diversity of problem classes. At a minimum, superintelligence entails a capacity to transfer understanding to novel domains of knowledge by means of abstraction. Yet whereas sentient agents can apply the canons of logical inference to alien state-spaces of experience that they explore, there is no algorithm by which insentient systems can abstract away from their zombiehood and apply their hypertrophied rationality to sentience. Sentience is literally inconceivable to a digital zombie. A zombie can't even know that it's a zombie - or what a zombie is. So if we grant that mastery of both the subjective and formal properties of mind is indeed essential to superintelligence, how do we even begin to program a classical digital computer with [the formalised counterpart of] a unitary phenomenal self that goes on to pursue recursive self-improvement - human-friendly or otherwise? What sort of ontological integrity does "it" possess? (cf. so-called mereological nihilism) What does this recursively "self"-improving software-based mind suppose [or can be humanly interpreted as supposing] is being optimised when it's "self"-editing? Are we talking about superintelligence - or just an unusually virulent form of polymorphic malware?

3.2. Does Sentience Matter?

How might the apologist for digital (super)intelligence respond?

First, s/he might argue that the manifold varieties of consciousness are too unimportant and/or causally impotent to be relevant to true intelligence. Intelligence, and certainly superintelligence, does not concern itself with trivia.

Yet in what sense is the terrible experience of, say, phenomenal agony or despair somehow trivial, whether subjectively to their victim, or conceived as disclosing an intrinsic feature of the natural world? Compare how, in a notional zombie world otherwise physically type-identical to our world, nothing would inherently matter at all. Perhaps some of our supposed zombie counterparts undergo boiling in oil. But this fate is of no intrinsic importance: they aren't sentient. In zombieworld, boiling in oil is not even trivial. It's merely a state of affairs amenable to description as the least-preferred option in an abstract information processor's arbitrary utility function. In the zombieworld operating theatre, your notional zombie counterpart would still routinely be administered general anaesthetics as well as muscle-relaxants before surgery; but the anaesthetics would be a waste of taxpayers' money. In contrast to such a fanciful zombie world, the nature of phenomenal agony undergone by sentient beings in our world can't be trivial, regardless of whether the agony plays an information-processing role in the life of an organism or is functionless neuropathic pain. Indeed, to entertain the possibility that (1) I'm in unbearable agony and (2) my agony doesn't matter, seems devoid of cognitive meaning. Agony that doesn't inherently matter isn't agony. For sure, a formal utility function that assigns numerical values (aka "utilities") to outcomes such that outcomes with higher utilities are always preferred to outcomes with lower utilities might strike sentient beings as analogous to importance; but such an abstraction is lacking in precisely the property that makes anything matter at all, i.e. intrinsic hedonic or dolorous tone. An understanding of why anything matters is cognitively too difficult for a classical digital zombie.
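
The formal notion of a utility function invoked here can be made concrete with a minimal sketch. The outcome names and numbers below are invented purely for illustration. The sketch shows that the preference ordering consists of nothing more than numerical comparison: any strictly increasing rescaling of the numbers yields the same ranking, and nothing in the formalism represents hedonic or dolorous tone.

```python
import math

# Hypothetical outcomes and utilities, invented for illustration only.
utilities = {
    "boiled_in_oil": -1000.0,
    "stubbed_toe": -1.0,
    "cup_of_tea": 2.0,
}

def rank(u):
    """Return outcomes ordered from most to least preferred."""
    return sorted(u, key=u.get, reverse=True)

def prefers(u, a, b):
    """Outcome a is preferred to b exactly when its utility is higher."""
    return u[a] > u[b]

# A strictly increasing transformation changes every number
# but leaves the preference ordering untouched.
rescaled = {name: math.atan(value) for name, value in utilities.items()}

print(rank(utilities))
print(rank(rescaled))
```

Swapping the labels "boiled_in_oil" and "cup_of_tea" would produce an equally well-formed utility function, which is one way of seeing why the text calls such a function arbitrary.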

At this point, a behaviourist-minded critic might respond that we're not dealing with a well-defined problem here, in common with any pseudo-problem related to subjective experience. But imposing this restriction is arbitrarily to constrain the state-space of what counts as an intellectual problem. Given that none of us enjoys noninferential access to anything at all beyond the phenomenology of one's own mind, its exclusion from the sphere of explanation is itself hugely problematic. Paperclips (etc), not phenomenal agony and bliss, are inherently trivial. The critic's objection that sentience is inconsequential to intelligence is back-to-front.

Perhaps the critic might argue that sentience is ethically important but computationally incidental. Yet we can be sure that phenomenal properties aren't causally impotent epiphenomena irrelevant to real-world general intelligence. This is because epiphenomena, by definition, lack causal efficacy - and hence lack the ability physically and functionally to stir us to write and talk about their unexplained existence. Epiphenomenalism is a philosophy of mind whose truth would forbid its own articulation. For reasons we simply don't understand, the pleasure-pain axis discloses the world's touchstone of intrinsic (un)importance; and without a capacity to distinguish the inherently (un)important, there can't be (super)intelligence, merely savantism and tool AI - and malware.

Second, perhaps the prophet of digital (super)intelligence might respond that (some of the future programs executed by) digital computers are nontrivially conscious, or at least potentially conscious, not least future software emulations of human mind-brains. For reasons we admittedly again don't understand, some physical states of matter and energy, namely the algorithms executed by various information processors, are identical with different states of consciousness, i.e. some or other functionalist version of the mind-brain identity theory is correct. Granted, we don't yet understand the mechanisms by which these particular kinds of information-processing generate consciousness. But whatever these consciousness-generating processes turn out to be, an ontology of scientific materialism harnessed to substrate-neutral functionalist AI is the only game in town. Or rather, only an arbitrary and irrational "carbon chauvinism" could deny that biological and nonbiological agents alike can be endowed with "bound" conscious minds capable of displaying full-spectrum intelligence.

Unfortunately, there is a seemingly insurmountable problem with this response. Identity is not a causal relationship. We can't simultaneously claim that a conscious state is identical with a brain state - or the state of a program executed by a digital computer - and maintain that this brain state or digital software causes (or "generates", or "gives rise to", etc) the conscious state in question. Nor can causality operate between what are only levels of description or computational abstraction. Within the assumptions of his or her conceptual framework, the materialist / digital functionalist can't escape the Hard Problem of consciousness and Levine's Explanatory Gap. In addition, the charge levelled against digital sentience sceptics of "carbon chauvinism" is simply question-begging. Intuitively, to be sure, the functionally unique valence properties of the carbon atom and the unique quantum-mechanical properties of liquid water are too low-level to be functionally relevant to conscious mind. But we don't know this. Such an assumption may just be a legacy of the era of symbolic AI. Most notably, the binding problem suggests that the unity of consciousness cannot be a classical phenomenon. By way of comparison, consider the view that primordial life elsewhere in the multiverse will be carbon-based. This conjecture was once routinely dismissed as "carbon chauvinism". It's now taken very seriously by astrobiologists. Micro-functionalism might be a more apt description than carbon chauvinism; but some forms of functionality may be anchored to the world's ultimate ontological basement, not least the pleasure-pain axis that alone confers significance on anything at all.

3.3. The Church-Turing Thesis and Full-Spectrum Superintelligence.

Another response open to the apologist for digital superintelligence is simply to invoke some variant of the Church-Turing thesis: essentially, that a function is algorithmically computable if and only if it is computable by a Turing machine. On pain of magic, humans are ultimately just machines. Presumably, there is a formal mathematico-physical description of organic information-processing systems, such as human psychonauts, who describe themselves as investigating the subjective properties of matter and energy. This formal description needn't invoke consciousness in any shape or form.The snag here is that even if, implausibly, we suppose that the Strong Physical Church-Turing thesis is true, i.e. any function that can be computed in polynomial time by a physical device can be calculated in polynomial time by a Turing machine, we don't have the slightest idea how to program the digital counterpart of a unitary phenomenal self that could undertake such an investigation of the varieties of consciousness or phenomenal object-binding. Nor is any such understanding on the horizon, either in symbolic AI or the probabilistic and statistical AI paradigm now in the ascendant. Just because the mind-brain may notionally be classically computable by some abstract machine in Platonia, as it were, this doesn't mean that the vertebrate mind-brain (and the world-simulation that it runs) is really a classical computer. We might just as well assume mathematical platonism rather than finitism is true and claim that, e.g. since every finite string of digits occurs in the decimal expansion of the transcendental number pi, your uploaded "mindfile" is timelessly encoded there too - an infinite number of times. Alas, immortality isn't that cheap. 
Back in the physical, finite natural world, the existence of "bound" phenomenal objects in our world-simulations, and unitary phenomenal minds rather than discrete pixels of "mind dust", suggests that organic minds cannot be classical information-processors. Given that we don't live in a classical universe but a post-Everett multiverse, perhaps we shouldn't be unduly surprised.
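To make the point about pi concrete, here is a minimal sketch of what "finding" a finite digit string in the decimal expansion involves. It uses Gibbons' unbounded spigot algorithm to stream digits; the function names `pi_digits` and `find_in_pi` and the `max_digits` cut-off are illustrative assumptions, not anything drawn from the literature discussed here.

```python
from itertools import islice


def pi_digits():
    """Yield the decimal digits of pi (3, 1, 4, 1, 5, ...) one at a time.

    Gibbons' unbounded spigot algorithm, using exact integer arithmetic.
    """
    q, r, t, k, n, l = 1, 0, 1, 1, 3, 3
    while True:
        if 4 * q + r - t < n * t:
            # The next digit is settled: emit it and rescale the state.
            yield n
            q, r, t, k, n, l = (
                10 * q, 10 * (r - n * t), t, k,
                (10 * (3 * q + r)) // t - 10 * n, l,
            )
        else:
            # Not enough precision yet: absorb one more term of the series.
            q, r, t, k, n, l = (
                q * k, (2 * q + r) * l, t * l, k + 1,
                (q * (7 * k + 2) + r * l) // (t * l), l + 2,
            )


def find_in_pi(pattern, max_digits=1000):
    """Return the decimal place (1-based) where `pattern` first starts.

    Only the first `max_digits` decimal places are searched (hypothetical
    cut-off); returns None if the pattern does not occur within them.
    """
    # Index 0 of this string is the leading 3, so index i is decimal place i.
    digits = "".join(str(d) for d in islice(pi_digits(), max_digits + 1))
    index = digits.find(pattern, 1)
    return index if index != -1 else None


if __name__ == "__main__":
    print(find_in_pi("14159"))
    print(find_in_pi("999999"))
```

Note that the search only works because the caller already possesses the string being sought: the expansion supplies no information that wasn't put in by hand, and the expected search depth grows exponentially with the length of the string.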

4.0. Quantum Minds and Full-Spectrum Superintelligence.

An alternative perspective to digital triumphalism, drawn ultimately from the raw phenomenology of one's own mind, the existence of multiple simultaneously bound perceptual objects in one's world-simulation, and the [fleeting, synchronic] unity of consciousness, holds that organic minds have been quantum computers for the past c. 540 million years. Insentient classical digital computers will never "wake up" and acquire software-based unitary minds that supplant biological minds rather than augment them.

What underlies this conjecture?

In short, to achieve full-spectrum AGI we'll need to solve both:

(1) the Hard Problem of Consciousness

and

(2) the Binding Problem.

These two seemingly insoluble challenges show that our existing conceptual framework is broken. Showing our existing conceptual framework is broken is easier than fixing it, especially if we are unwilling to sacrifice the constraint of physicalism: at sub-Planckian energies, the Standard Model of physics seems well-confirmed. A more common reaction to the ontological scandal of consciousness in the natural world is simply to acknowledge that consciousness and the binding problem alike are currently too difficult for us to solve; put these mysteries to one side as though they were mere anomalies that can be quarantined from the rest of science; and then act as though our ignorance is immaterial for the purposes of building artificial (super)intelligence - despite the fact that consciousness is the only thing that can matter, or enable anything else to matter. In some ways, undoubtedly, this pragmatic approach has been immensely fruitful in "narrow" AI: programming trumps philosophising. Certainly, the fact that e.g. Deep Blue and Watson don't need the neuronal architecture of phenomenal minds to outperform humans at chess or Jeopardy is suggestive. It's tempting to extrapolate their successes and make the claim that programmable, insentient digital machine intelligence, presumably deployed in autonomous artificial robots endowed with a massively classically parallel subsymbolic connectionist architecture, could one day outperform humans in absolutely everything, or at least absolutely everything that matters. However, everything that matters includes phenomenal minds; and any problem whose solution necessarily involves the subjective textures of mind. Could the Hard Problem of consciousness be solved by a digital zombie? Could a digital zombie explain the nature of qualia? These questions seem scarcely intelligible. Clearly, devising a theory of consciousness that isn't demonstrably incoherent or false poses a daunting challenge. 
The enigma of consciousness is so unfathomable within our conceptual scheme that even a desperate-sounding naturalistic dualism or a defeatist mysterianism can't simply be dismissed out of hand, though these options won't be explored here. Instead, a radically conservative and potentially testable option will be canvassed.

The argument runs as follows. Solving both the Hard Problem and the Binding Problem demands a combination of first, a robustly monistic Strawsonian physicalism - the only scientifically literate form of panpsychism; and second, information-bearing ultrarapid quantum coherent states of mind executed on sub-femtosecond timescales, i.e. "quantum mind", shorn of unphysical collapsing wave functions à la Penrose (cf. Orch-OR) or New-Age mumbo-jumbo. The conjecture argued here is that macroscopic quantum coherence is indispensable to phenomenal object-binding and unitary mind, i.e. that ostensibly discretely and distributively processed edges, textures, motions, colours (etc) in the CNS are fleetingly but irreducibly bound into single macroscopic entities when one apprehends or instantiates a perceptual object in one's world-simulation - a simulation that runs at around 10¹³ quantum-coherent frames per second.

First, however, let's review Strawsonian physicalism, without which a solution to the Hard Problem of consciousness can't even get off the ground.

4.1. Pan-experientialism / Strawsonian Physicalism.

Physicalism and materialism are often supposed to be close cousins. But this needn't be the case. On the contrary, one may be both a physicalist and a panpsychist - or even both a physicalist and a monistic idealist. Strawsonian physicalists acknowledge the world is exhaustively described by the equations of physics. There is no "element of reality", as Einstein put it, that is not captured in the formalism of theoretical physics - the quantum-field theoretic equations and their solutions. However, physics gives us no insight into the intrinsic nature of the stuff of the world - what "breathes fire into the equations" as arch-materialist Stephen Hawking poetically lamented. Key terms in theoretical physics like "field" are defined purely mathematically.

So is the intrinsic nature of the physical, the "fire" in the equations, a wholly metaphysical question? Kant claimed famously that we would never understand the noumenal essence of the world, simply phenomena as structured by the mind. Strawson, drawing upon arguments made by Oxford philosopher Michael Lockwood but anticipated by Russell and Schopenhauer, turns Kant on his head. Actually, there is one part of the natural world that we do know as it is in itself, and not at one remove, so to speak - and its intrinsic nature is disclosed by subjective properties of one's own conscious mind. Thus it transpires that the "fire" in the equations is utterly different from what one's naive materialist intuitions would suppose.

Yet this conjecture still doesn't close the Explanatory Gap.

4.2. The Binding Problem.

Are Phenomenal Minds A Classical Or A Quantum Phenomenon?

Why enter the quantum mind swamp? After all, if one is bold [or foolish] enough to entertain pan-experientialism / Strawsonian physicalism, then why be sceptical about the prospect of non-trivial digital sentience, let alone full-spectrum AGI? Well, counterintuitively, an ontology of pan-experientialism / Strawsonian physicalism does not overpopulate the world with phenomenal minds. For on pain of animism, mere aggregates of discrete classical "psychons", primitive flecks of consciousness, are not themselves unitary subjects of experience, regardless of any information-processing role they may have been co-opted into playing in the CNS. We still need to solve the Binding Problem - and with it, perhaps, the answer to Moravec's paradox. Thus a nonsentient digital computer can today be programmed to develop powerful and exact models of the physical universe. These models can be used to make predictions with superhuman speed and accuracy about everything from the weather to thermonuclear reactions to the early Big Bang. But in other respects, digital computers are just tools and toys. To resolve Moravec's paradox, we need to explain why in unstructured, open-field contexts a bumble-bee can comprehensively outclass Alpha Dog. And in the case of humans, how can 80 billion odd interconnected neurons, conceived as discrete, membrane-bound, spatially distributed classical information processors, generate unitary phenomenal objects, unitary phenomenal world-simulations populated by multiple dynamic objects in real time, and a fleetingly unitary self that can act flexibly and intelligently in a fast-changing local environment? This combination problem was what troubled William James, the American philosopher and psychologist otherwise sympathetic to panpsychism, over a hundred years ago in Principles of Psychology (1890). 
In contemporary idiom, even if fields (superstrings, p-branes, etc) of microqualia are the stuff of the world whose behaviour the formalism of physics exhaustively describes, and even if membrane-bound quasi-classical neurons are at least rudimentarily conscious, then why aren't we merely massively parallel informational patterns of classical "mind dust" - quasi-zombies as it were, with no more ontological integrity than the population of China? The Explanatory Gap is unbridgeable as posed. Our phenomenology of mind seems as inexplicable as if 1.3 billion skull-bound Chinese were to hold hands and suddenly become a unitary subject of experience. Why? How?

Or rather, where have we gone wrong?

4.3. Why The Mind Is Probably A Quantum Computer.

Here we enter the realm of speculation - though critically, speculation that will be scientifically testable with tomorrow's technology. For now, critics will pardonably view such speculation as no more than the empty hope that two unrelated mysteries, namely the interpretation of quantum mechanics and an understanding of consciousness, will somehow cancel each other out. But what's at stake is whether two apparently irreducible kinds of holism, i.e. "bound" perceptual objects / unitary selves and quantum-coherent states of matter, are more than merely coincidental: a much tighter explanatory fit than a mere congruence of disparate mysteries. Thus consider Max Tegmark's much-cited critique of quantum mind. For the sake of argument, assume that pan-experientialism / Strawsonian physicalism is true but Tegmark rather than his critics is correct: thermally-induced decoherence effectively "destroys" [i.e. transfers to the extra-neural environment in a thermodynamically irreversible way] distinctively quantum-mechanical coherence in an environment as warm and noisy as the brain within around 10⁻¹⁵ of a second - rather than the much longer times claimed by Hameroff et al. Granted pan-experientialism / Strawsonian physicalism, what might it feel like "from the inside" to instantiate a quantum computer running at 10¹⁵ irreducible quantum-coherent frames per second - computationally optimised by hundreds of millions of years of evolution to deliver effectively real-time simulations of macroscopic worlds? How would instantiating this ultrarapid succession of neuronal superpositions be sensed differently from the persistence of vision undergone when watching a movie? No, this conjecture isn't a claim that visual perception of mind-independent objects operates on sub-femtosecond timescales. This patently isn't the case. Nerve impulses travel up the optic nerve to the mind-brain only at a sluggish 100 m/s or so. 
Rather when we're awake, input from the optic nerve selects mind-brain virtual world states. Even when we're not dreaming, our minds never actually perceive our surroundings. The terms "observation" and "perception" are systematically misleading. "Observation" suggests that our minds access our local environment, whereas all these surroundings can do is play a distal causal role in selecting from a menu of quantum-coherent states of one's own mind: neuronal superpositions of distributed feature-processors. Our awake world-simulations track gross fitness-relevant patterns in the local environment with a delay of 150 milliseconds or so; when we're dreaming, such state-selection (via optic nerve impulses, etc.) is largely absent.

In default of experimental apparatus sufficiently sensitive to detect macroscopic quantum coherence in the CNS on sub-femtosecond timescales, this proposed strategy to bridge the Explanatory Gap is of course only conjecture. Or rather it's little more than philosophical hand-waving. Most AI theorists assume that at such a fine-grained level of temporal resolution our advanced neuroscanners would just find "noise" - insofar as mainstream researchers consider quantum mind hypotheses at all. Moreover, an adequate theory of mind would need rigorously to derive the properties of our bound macroqualia from superpositions of the (hypothetical) underlying field-theoretic microqualia posited by Strawsonian physicalism - not simply hint at how our bound macroqualia might be derivable. But if the story above is even remotely on the right lines, then a classical digital computer - or the population of China (etc) - could never be non-trivially conscious or endowed with a mind of its own.

True or false, it's worth noting that if quantum mechanics is complete, then the existence of macroscopic quantum coherent states in the CNS is not in question: the existence of macroscopic superpositions is a prediction of any realist theory of quantum mechanics that doesn't invoke state vector collapse. Recall Schrödinger's unfortunate cat. Rather what's in question is whether such states could have been recruited via natural selection to do any computationally useful work. Max Tegmark ["Why the brain is probably not a quantum computer"], for instance, would claim otherwise. To date, much of the debate has focused on decoherence timescales, allegedly too rapid for any quantum mind account to fly. And of course classical serial digital computers too are quantum systems, vulnerable to quantum noise: this doesn't make them quantum computers. But this isn't the claim at issue here. Rather it's that future molecular matter-wave interferometry sensitive enough to detect quantum coherence in a macroscopic mind-brain on sub-femtosecond timescales would detect, not merely random psychotic "noise", but quantum coherent states - states isomorphic to the macroqualia / dynamic objects making up the egocentric virtual worlds of our daily experience.

To highlight the nature of this prediction, let's lapse briefly into the idiom of a naive realist theory of perception. Recall how inspecting the surgically exposed brain of an awake subject on an operating table uncovers no qualia, no bound perceptual objects, no unity of consciousness, no egocentric world-simulations, just cheesy convoluted neural porridge - or, under a microscope, discrete classical nerve cells. Hence the incredible eliminativism about consciousness of Daniel Dennett. On a materialist ontology, consciousness is indeed impossible. But if a quantum mind story of phenomenal object-binding is correct, the formal shadows of the macroscopic phenomenal objects of one's everyday lifeworld could one day be experimentally detected with utopian neuroscanning. They are just as physically real as the long-acting macroscopic quantum coherence manifested by, say, superfluid helium at distinctly chillier temperatures. Phenomenal sunsets, symphonies and skyscrapers in the CNS could all in principle be detectable over intervals that are fabulously long measured in units of the world's natural Planck scale, even if fabulously short by the naive intuitions of folk psychology. Without such bound quantum-coherent states, according to this hypothesis, we would be zombies. Given Strawsonian physicalism, the existence of such states explains why biological robots couldn't be insentient automata. On this story, the spell of a false ontology [i.e. materialism] and a residual naive realism about perception allied to classical physics leads us to misunderstand the nature of the awake / dreaming mind-brain as some kind of quasi-classical object. The phenomenology of our minds shows it's nothing of the kind.

4.4. The Incoherence Of Digital Minds.

Most relevant here, another strong prediction of the quantum mind conjecture is that even utopian classical digital computers - or classically parallel connectionist systems - will never be non-trivially conscious, nor will they ever achieve full-spectrum superintelligence. Assuming Strawsonian physicalism is true, even if molecular matter-wave interferometry could detect the "noise" of fleeting macroscopic superpositions internal to the CPU of a classical computer, we've no grounds for believing that a digital computer [or any particular software program it executes] can be a subject of experience. Their fundamental physical components may [or may not] be discrete atomic microqualia rather than the insentient silicon (etc.) atoms we normally suppose. But their physical constitution is computationally incidental to execution of the sequence of logical operations they execute. Any distinctively quantum mechanical effects are just another kind of "noise" against which we design error-detection and -correction algorithms. So at least on the narrative outlined here, the future belongs to sentient, recursively self-improving biological robots synergistically augmented by smarter digital software, not our supporting cast of silicon zombies.

On the other hand, we aren't entitled to make the stronger claim that only an organic mind-brain could be a unitary subject of experience. For we simply don't know what may or may not be technically feasible in a distant era of mature nonbiological quantum computing centuries or millennia hence. However, a supercivilisation based on mature nonbiological quantum computing is not imminent.

4.5. The Infeasibility Of "Mind Uploading".

On the face of it, the prospect of scanning, digitising and uploading our minds offers a way to circumvent our profound ignorance of both the Hard Problem of consciousness and the binding problem. Mind uploading would still critically depend on identifying which features of the mind-brain are mere "substrate", i.e. incidental implementation details of our minds, and which features are functionally essential to object-binding and unitary consciousness. On any coarse-grained functionalist story, at least, this challenge might seem surmountable. Presumably the mind-brain can formally be described by the connection and activation evolution equations of a massively parallel connectionist architecture, with phenomenal object-binding a function of simultaneity: different populations of neurons (edge-detectors, colour detectors, motion detectors, etc) firing together to create ephemeral bound objects. But this can't be the full story. Mere simultaneity of neuronal spiking can't, by itself, explain phenomenal object-binding. There is no one place in the brain where distributively processed features come together into multiple bound objects in a world-simulation instantiated by a fleetingly unitary subject of experience. We haven't explained why a population of 80 billion ostensibly discrete membrane-bound neurons, classically conceived, isn't a zombie in the sense that 1.3 billion skull-bound Chinese minds or a termite colony is a zombie. In default of a currently unimaginable scientific / philosophical breakthrough in the understanding of consciousness, it's hard to see how our "mind-files" could ever be uploaded to a digital computer. If a quantum mind story is true, mind-uploading can't be done.

In essence, two distinct questions arise here. First, given finite, real-world computational resources, can a classical serial digital computer - or a massively (classically) parallel connectionist system - faithfully emulate the external behaviour of a biological mind-brain?

Second, can a classical digital computer emulate the intrinsic phenomenology of our minds, not least multiple bound perceptual objects simultaneously populating a unitary experiential field apprehended or instantiated by a [fleetingly] unitary self?

If our answer to the first question were "yes", then not to answer "yes" to the second question too might seem to be sterile philosophical scepticism - just a rehash of the Problem Of Other Minds, or the idle sceptical worry about inverted qualia: how can I know that when I see red that you don't see blue? (etc). But the problem is much more serious. Compare how, if you are given the notation of a game of chess that Kasparov has just played, then you can faithfully emulate the gameplay. Yet you know nothing whatsoever about the texture of the pieces - or indeed whether the pieces had any textures at all: perhaps the game was played online. Likewise with the innumerable textures of consciousness - with the critical difference that the textures of consciousness are the only reason our "gameplay" actually matters. Unless we rigorously understand consciousness, and the basis of our teeming multitude of qualia, and how those qualia are bound to constitute a subject of experience, the prospect of uploading is a pipedream. Furthermore, we may suspect on theoretical grounds that the full functionality of unitary conscious minds will prove resistant to digital emulation; and classical digital computers will never be anything but zombies.

4.6. Object-Binding, World-Simulations and Phenomenal Selves.

How can one know about anything beyond the contents of one's own mind or software program? The bedrock of general (super)intelligence is the capacity to execute a data-driven simulation of the mind-independent world in open-field contexts, i.e. to "perceive" the fast-changing local environment in almost real time. Without this real-time computing capacity, we would just be windowless monads. For sure, simple forms of behaviour-based robotics are feasible, notably the subsumption architecture of Rodney Brooks and his colleagues at MIT. Quasi-autonomous "bio-inspired" reactive robots can be surprisingly robust and versatile in well-defined environmental contexts. Some radical dynamical systems theorists believe that we can dispense with anything resembling transparent and "projectible" representations in the CNS altogether, and instead model the mind-brain using differential equations. But an agent without any functional capacity for data-driven real-time world-simulation couldn't even take an IQ test, let alone act intelligently in the world.

So the design of artificial intelligent lifeforms with a capacity efficiently to run egocentric world-simulations in unstructured, open-field contexts will entail confronting Moravec's paradox. In the post-Turing era, why is engineering the preconditions for allegedly low-level sensorimotor competence in robotics so hard, and programming the allegedly high-level logico-mathematical prowess in computer science so easy - the opposite evolutionary trajectory to organic robots over the past 540 million years? Solving Moravec's paradox in turn will entail solving the binding problem. And we don't understand how the human mind/brain solves the binding problem - despite the speculations about macroscopic quantum coherence in organic neural networks floated above. Presumably, some kind of massively parallel sub-symbolic connectionist architecture with exceedingly powerful learning algorithms is essential to world-simulation. 
Yet mere temporal synchrony of neuronal firing patterns of discrete, distributed classical neurons couldn't suffice to generate a phenomenal world instantiated by a person. Nor could programs executed in classical serial processors.

How is this naively "low-level" sensorimotor question relevant to the end of the human era? Why would a hypothetical nonfriendly AGI-in-a-box need to solve the binding problem and continually simulate / "perceive" the external world in real time in order to pose (potentially) an existential threat to biological sentience? This is the spectre that MIRI seek to warn the world against should humanity fail to develop Safe AI. Well, just as there is nothing to stop someone who, say, doesn't like "Jewish physics" from gunning down a cloistered (super-)Einstein in his study, likewise there is nothing to stop a simple-minded organic human in basement reality switching the computer that's hosting (super-)Watson off at the mains if he decides he doesn't like computers - or the prospect of human replacement by nonfriendly super-AGI. To pose a potential existential threat to Darwinian life, the putative super-AGI would need to possess ubiquitous global surveillance and control capabilities so it could monitor and defeat the actions of ontologically low-level mindful agents - and persuade them in real time to protect its power-source. The super-AGI can't simply infer, predict and anticipate these actions in virtue of its ultrapowerful algorithms: the problem is computationally intractable. Living in the basement, as disclosed by the existence of one's own unitary phenomenal mind, has ontological privileges. It's down in the ontological basement that the worst threats to sentient beings are to be found - threats emanating from other grim basement-dwellers evolved under pressure of natural selection. For the single greatest underlying threat to human civilisation still lies, not in rogue software-based AGI going FOOM and taking over the world, but in the hostile behaviour of other male human primates doing what Nature "designed" us to do, namely wage war against other male primates using whatever tools are at our disposal. 
Evolutionary psychology suggests, and the historical record confirms, that the natural behavioural phenotype of humans resembles chimpanzees rather than bonobos. Weaponised Tool AI is the latest and potentially greatest weapon male human primates can use against other coalitions of male human primates. Yet we don't know how to give that classical digital AI a mind of its own - or whether such autonomous minds are even in principle physically constructible.

5.0. CONCLUSION

The Qualia Explosion.

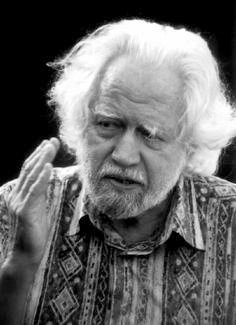

Supersentience: Turing plus Shulgin?

Compared to the natural sciences (cf. the Standard Model in physics) or computing (cf. the Universal Turing Machine), the "science" of consciousness is pre-Galilean, perhaps even pre-Socratic. State-enforced censorship of the range of subjective properties of matter and energy in the guise of a prohibition on psychoactive experimentation is a powerful barrier to knowledge. The legal taboo on the empirical method in consciousness studies prevents experimental investigation of even the crude dimensions of the Hard Problem, let alone locating a solution-space where answers to our ignorance might conceivably be found.

Singularity theorists are undaunted by our ignorance of this fundamental feature of the natural world. Instead, the Singularitarians offer a narrative of runaway machine intelligence in which consciousness plays a supporting role ranging from the minimal and incidental to the completely non-existent. However, highlighting the Singularity movement's background assumptions about the nature of mind and intelligence, not least the insignificance of the binding problem to AGI, reveals why FUSION and REPLACEMENT scenarios are unlikely - though a measure of "cyborgification" of sentient biological robots augmented with ultrasmart software seems plausible and perhaps inevitable.

If full-spectrum superintelligence does indeed entail navigation and mastery of the manifold state-spaces of consciousness, and ultimately a seamless integration of this knowledge with the structural understanding of the world yielded by the formal sciences, then where does this elusive synthesis leave the prospects of posthuman superintelligence? Will the global proscription of radically altered states last indefinitely?

Social prophecy is always a minefield. However, there is one solution to the indisputable psychological health risks posed to human minds by empirical research into the outlandish state-spaces of consciousness unlocked by ingesting the tryptamines, phenylethylamines, isoquinolines and other pharmacological tools of sentience investigation. This solution is to make "bad trips" physiologically impossible - whether for individual investigators or, in theory, for human society as a whole. Critics of mood-enrichment technologies sometimes contend that a world animated by information-sensitive gradients of bliss would be an intellectually stagnant society: crudely, a Brave New World. On the contrary, biotech-driven mastery of our reward circuitry promises a knowledge explosion in virtue of allowing a social, scientific and legal revolution: safe, full-spectrum biological superintelligence. For genetic recalibration of hedonic set-points - as distinct from creating uniform bliss - potentially leaves cognitive function and critical insight both sharp and intact; and offers a launchpad for consciousness research in mind-spaces alien to the drug-naive imagination. A future biology of invincible well-being would not merely immeasurably improve our subjective quality of life: empirically, pleasure is the engine of value-creation. In addition to enriching all our lives, radical mood-enrichment would permit safe, systematic and responsible scientific exploration of previously inaccessible state-spaces of consciousness. If we were blessed with a biology of invincible well-being, exotic state-spaces would all be saturated with a rich hedonic tone.

Until this hypothetical world-defining transition, pursuit of the rigorous first-person methodology and rational drug-design strategy pioneered by Alexander Shulgin in PiHKAL and TiHKAL remains confined to the scientific counterculture. Investigation is risky, mostly unlawful, and unsystematic. In mainstream society, academia and peer-reviewed scholarly journals alike, ordinary waking consciousness is assumed to define the gold standard in which knowledge-claims are expressed and appraised. Yet to borrow a homely-sounding quote from Einstein, "What does the fish know of the sea in which it swims?" Just as a dreamer can gain only limited insight into the nature of dreaming consciousness from within a dream, likewise the nature of ordinary waking consciousness can only be glimpsed from within its confines. In order scientifically to understand the realm of the subjective, we'll need to gain access to all its manifestations, not just the impoverished subset of states of consciousness that tended to promote the inclusive fitness of human genes on the African savannah.

5.1. AI, Genome Biohacking and Utopian Superqualia.

Why the Proportionality Thesis Implies an Organic Singularity.

So if the preconditions for full-spectrum superintelligence, i.e. access to superhuman state-spaces of sentience, remain unlawful, where does this roadblock leave the prospects of runaway self-improvement to superintelligence? Could recursive genetic self-editing of our source code repair the gap? Or will traditional human personal genomes be policed by a dystopian Gene Enforcement Agency in a manner analogous to the coercive policing of traditional human minds by the Drug Enforcement Agency?

Even in an ideal regulatory regime, the process of genetic and/or pharmacological self-enhancement is intuitively too slow for a biological Intelligence Explosion to be a live option, especially when set against the exponential increase in digital computer processing power and inorganic AI touted by Singularitarians. Prophets of imminent human demise in the face of machine intelligence argue that there can't be a Moore's law for organic robots. Even the Flynn Effect, the three-points-per-decade increase in IQ scores recorded during the 20th century, is comparatively puny; and in any case, this narrowly-defined intelligence gain may now have halted in well-nourished Western populations.

However, writing off all scenarios of recursive human self-enhancement would be premature. Presumably, the smarter our nonbiological AI, the more readily AI-assisted humans will be able recursively to improve our own minds with user-friendly wetware-editing tools - not just editing our raw genetic source code, but also the multiple layers of transcription and feedback mechanisms woven into biological minds. Presumably, our ever-smarter minds will be able to devise progressively more sophisticated, and also progressively more user-friendly, wetware-editing tools. These wetware-editing tools can accelerate our own recursive self-improvement - and manage potential threats from nonfriendly AGI that might harm rather than help us, assuming that our earlier strictures against the possibility of digital software-based unitary minds were mistaken. MIRI rightly call attention to how small enhancements can yield immense cognitive dividends: the relatively short genetic distance between humans and chimpanzees suggests how relatively small enhancements can exert momentous effects on a mind's general intelligence, thereby implying that AGIs might likewise become disproportionately powerful through a small number of tweaks and improvements. In the post-genomic era, presumably exactly the same holds true for AI-assisted humans and transhumans editing their own minds. What David Chalmers calls the proportionality thesis, i.e. increases in intelligence lead to proportionate increases in the capacity to design intelligent systems, will be vindicated as recursively self-improving organic robots modify their own source code and bootstrap our way to full-spectrum superintelligence: in essence, an organic Singularity. And in contrast to classical digital zombies, superficially small molecular differences in biological minds can result in profoundly different state-spaces of sentience. 
Compare the ostensibly trivial difference in gene expression profiles of neurons mediating phenomenal sight and phenomenal sound - and the radically different visual and auditory worlds they yield.

Compared to FUSION or REPLACEMENT scenarios, the AI-human CO-EVOLUTION conjecture is apt to sound tame. The likelihood that our posthuman successors will also be our biological descendants suggests at most a radical conservatism. In reality, a post-Singularity future where today's classical digital zombies were superseded merely by faster, more versatile classical digital zombies would be infinitely duller than a future of full-spectrum supersentience. For all insentient information processors are exactly the same inasmuch as the living dead are not subjects of experience. They'll never even know what it's like to be "all dark inside" - or the computational power of phenomenal object-binding that yields illumination. By contrast, posthuman superintelligence will not just be quantitatively greater but also qualitatively alien to archaic Darwinian minds. Cybernetically enhanced and genetically rewritten biological minds can abolish suffering throughout the living world and banish experience below "hedonic zero" in our forward light-cone, an ethical watershed without precedent. Post-Darwinian life can enjoy gradients of lifelong blissful supersentience with the intensity of a supernova compared to a glow-worm. A zombie, on the other hand, is just a zombie - even if it squawks like an Einstein. Posthuman organic minds will dwell in state-spaces of experience for which archaic humans and classical digital computers alike have no language, no concepts, and no words to describe our ignorance. Most radically, hyperintelligent organic minds will explore state-spaces of consciousness that do not currently play any information-signalling role in living organisms, and are impenetrable to investigation by digital zombies. In short, biological intelligence is on the brink of a recursively self-amplifying Qualia Explosion - a phenomenon of which digital zombies are invincibly ignorant, and invincibly ignorant of their own ignorance.
We humans too, of course, are mostly ignorant of what we're lacking: the nature, scope and intensity of such posthuman superqualia are beyond the bounds of archaic human experience. Even so, enrichment of our reward pathways can ensure that full-spectrum biological superintelligence will be sublime.

David Pearce

(2012, last updated 2021)

